When a Rubric and Evidence Shape the Learning

Slowing Down to Define Understanding

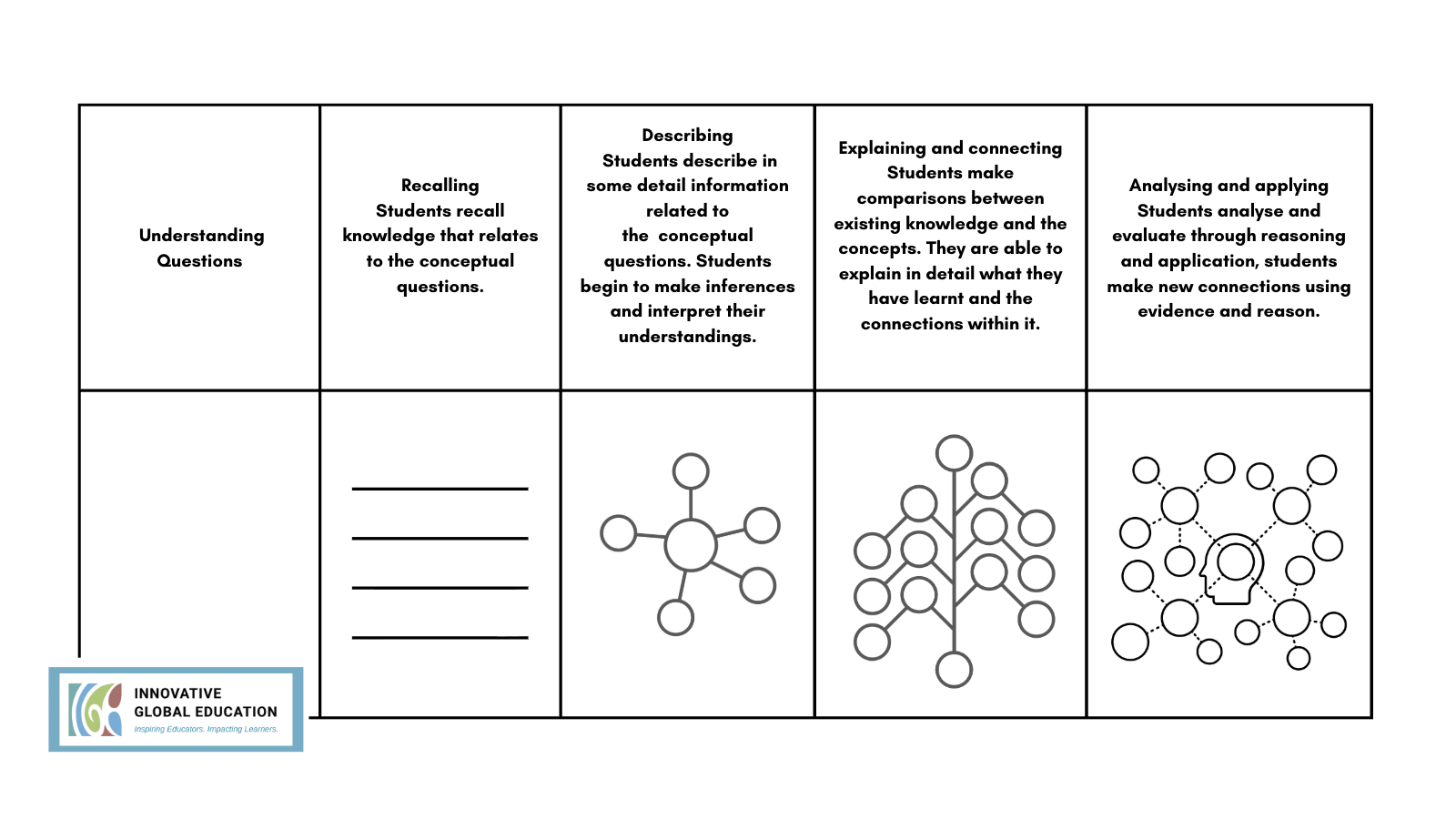

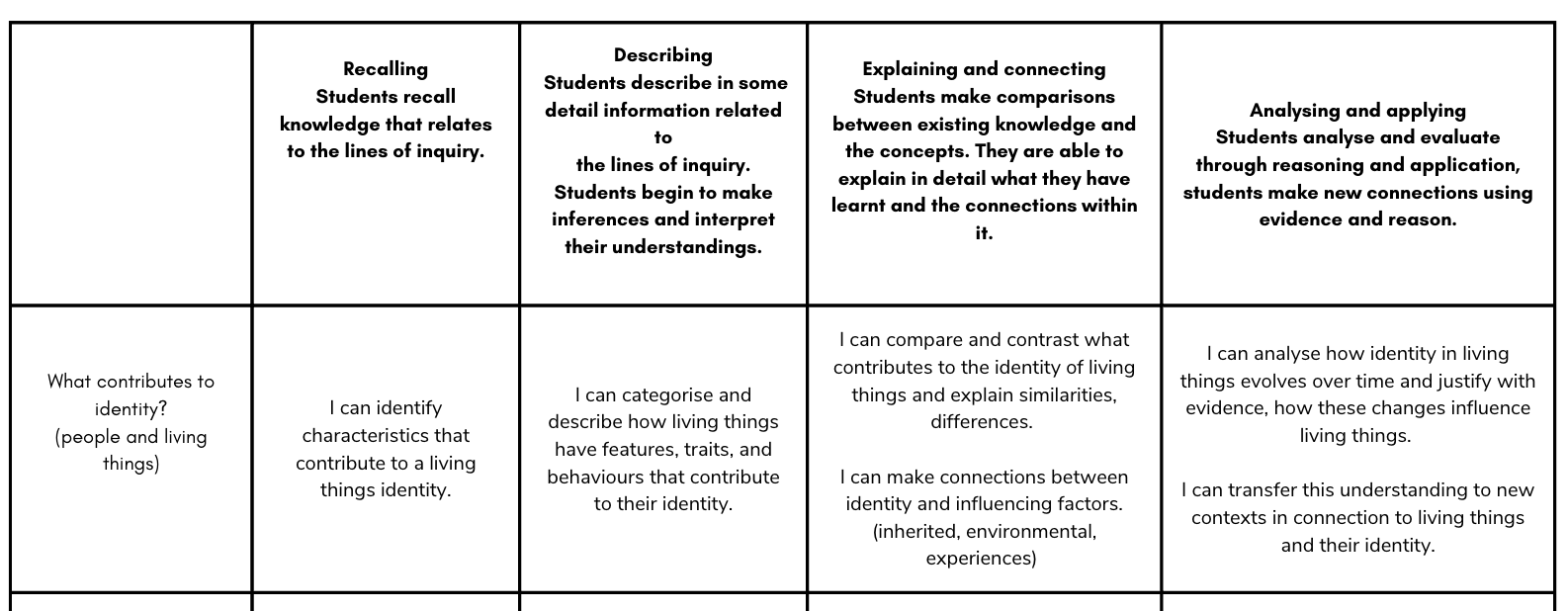

Designing a Rubric of Understanding at the outset required teachers to think differently.

What would emerging understanding sound and look like?

What might partial understanding look like?

What would developing reasoning involve?

What would analytical, transferable understanding look like in action?

These conversations required unpacking the conceptual understanding itself and clarifying the progression of thinking over time, ensuring shared clarity and moderation across the teaching team.

The focus shifted from:

“What are we going to do in this unit?”

to

“How will students’ understanding deepen across this unit?”

From the example above, you can see how understanding develops across the conceptual questions. The rubric is revisited throughout the unit to ensure learning is experienced as a continuous journey rather than a single event.

The rubric stopped being something attached to a task. It became the anchor for the learning journey: a tool for thinking, not scoring, and an opportunity to continually assess and plan in response to students.

Planning to Elicit Evidence: Not Hoping It Appears

The most powerful shift came with the next question:

How do we intentionally design learning to elicit evidence aligned to the rubric and the intended understanding?

Not hope it surfaces.

Not assume it will appear.

But explicitly plan for it.

If a descriptor states that students can explain conceptual relationships, what prompt will require explanation?

If developing understanding involves transfer, what task will demand application in a new context?

If misconceptions are likely, what comparison or contrast will surface them?

Eliciting Evidence in Action

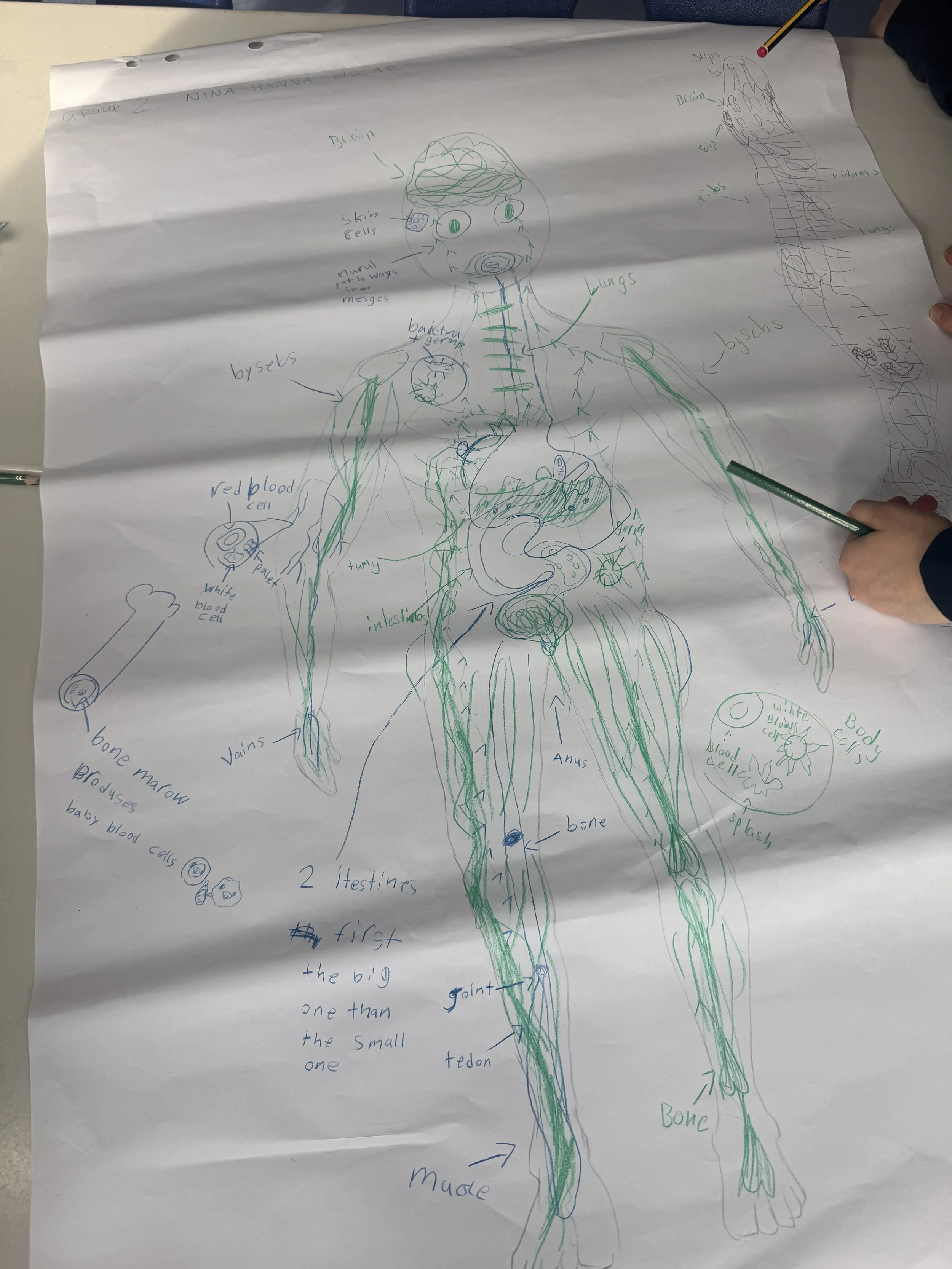

In one classroom at BBIS Berlin Brandenburg International School GmbH, teachers explored the question:

What is the structure and function of body parts and how are they interconnected?

Students were first asked to collaboratively represent their understanding of structures and functions. They then individually demonstrated how those structures were connected and why they mattered.

The engagement was not simply an activity.

It was deliberately designed to surface evidence aligned to the rubric.

Teachers were not looking for completion.

They were listening for reasoning.

They were noticing conceptual connections.

They were identifying misconceptions.

That evidence then informed the next phase of planning.

The rubric becomes more than a description of progression. It becomes a blueprint for evidence.

Lessons are intentionally designed as opportunities to unpack students’ current understanding.

When planning is deliberate about eliciting evidence, misconceptions and emerging understandings surface earlier, leaving time to respond.

Listening Differently in the Classroom

When lessons are crafted to reveal thinking, teachers listen differently.

A student's explanation becomes data.

A written justification reveals conceptual connections.

A classroom discussion surfaces understanding.

A problem-solving strategy exposes reasoning and misconceptions simultaneously.

Instead of asking, “Did they get it?” the question becomes:

“What does this reveal about where their understanding currently sits?”

Planning to elicit evidence positions teachers as researchers of student thinking.

From Evidence to Instructional Decisions

Eliciting evidence only matters if it shapes what happens next.

Eliciting Evidence in Action

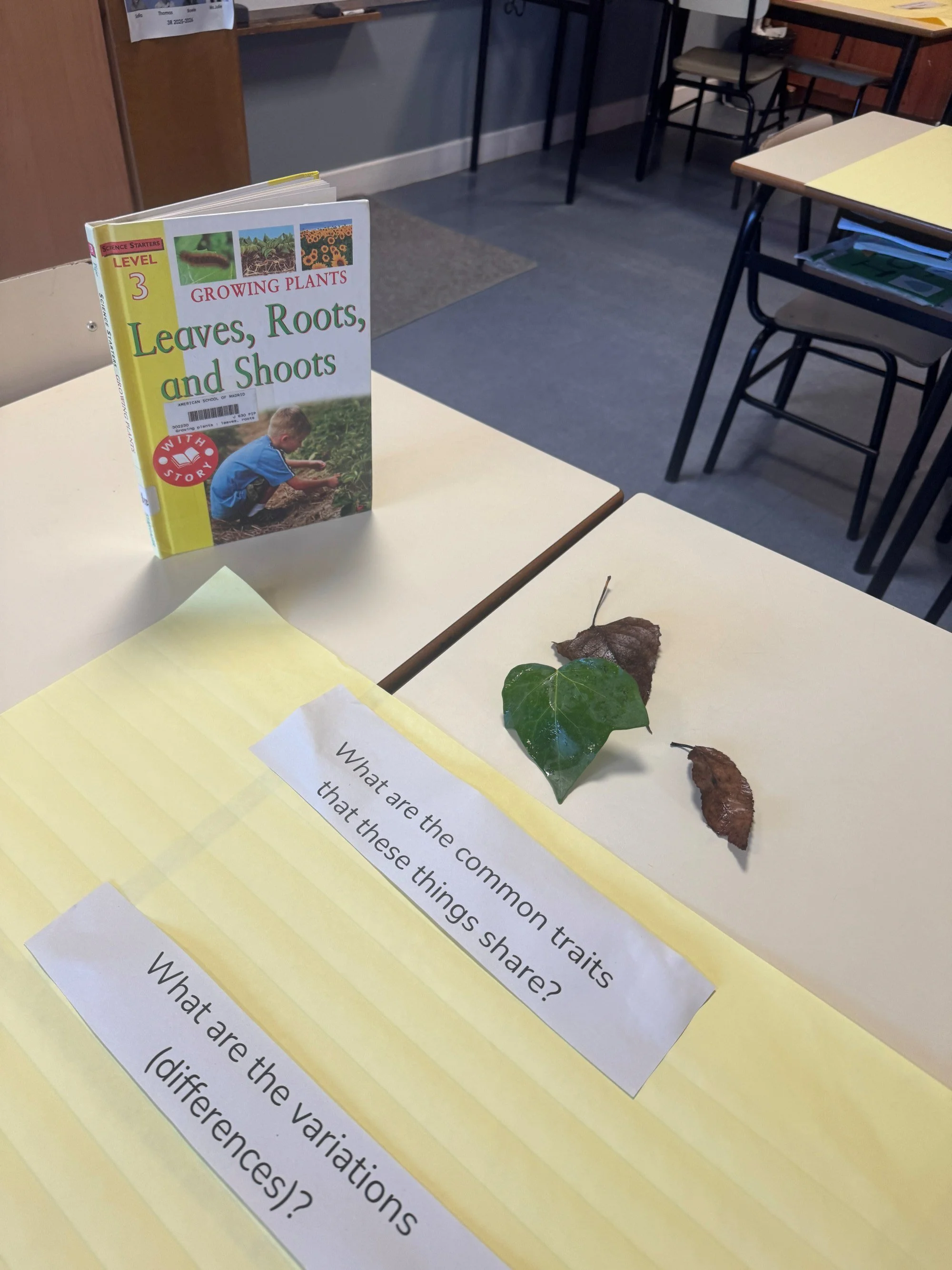

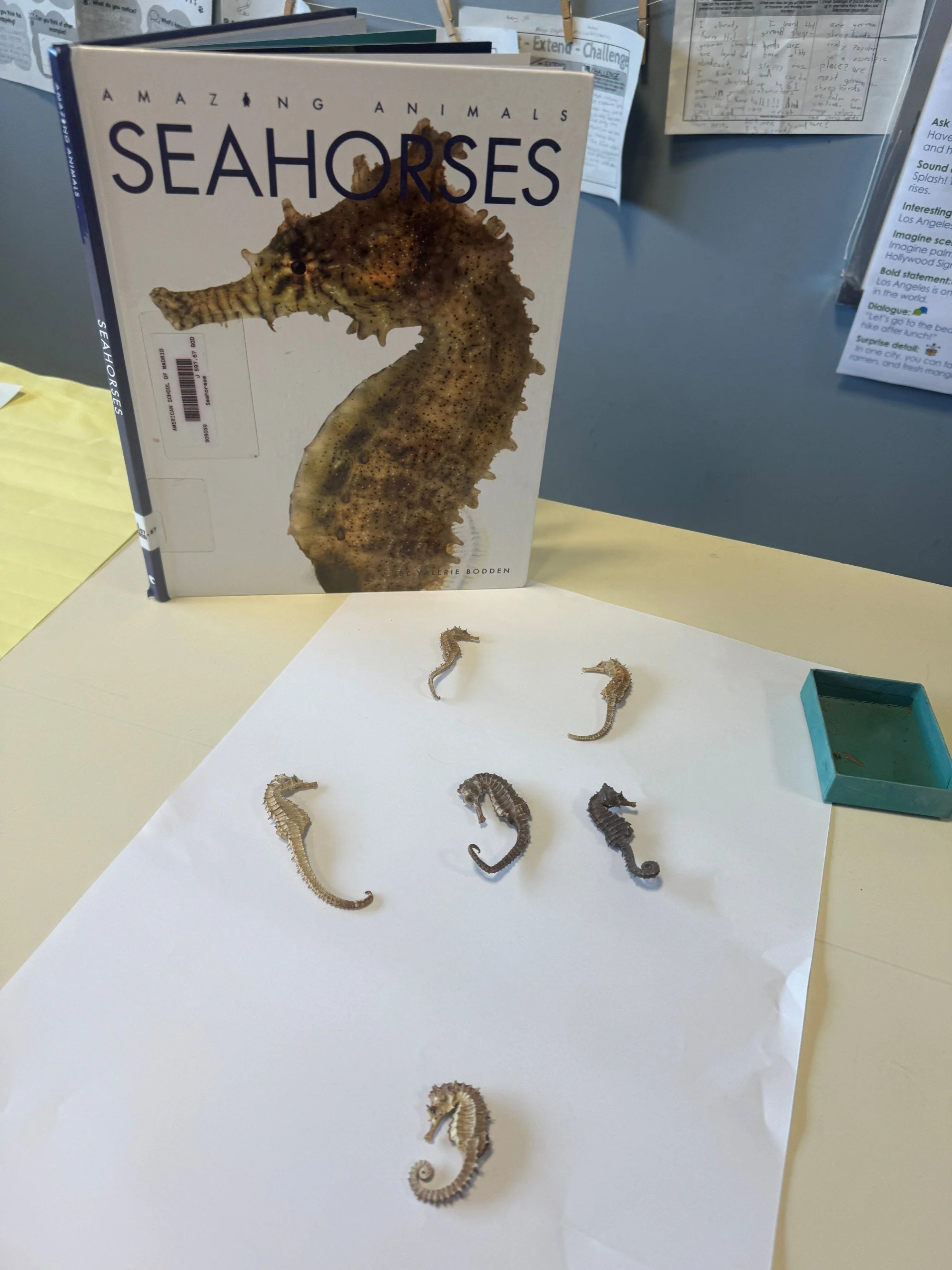

In another context at the American School of Madrid, students explored:

What are variations in living things and what causes them?

Different examples of living organisms — seahorses, leaves, shells, and insects — were placed on tables. Carefully crafted prompts guided the inquiry:

What are the common traits?

What are the variations?

What causes the variations?

The purpose was not classification alone.

It was to unpack students’ current conceptual understanding of variation and causation.

The questions were intentionally aligned to the rubric descriptors so that teachers could analyse where students were positioned along the progression of understanding.

That insight shaped what came next.

If reasoning remained surface-level, the next lesson deepened conceptual connections.

If misconceptions surfaced, instruction paused to address them explicitly.

If transfer began to emerge, opportunities were extended and varied.

The rubric lived in the planning cycle.

Evidence → Interpretation → Instructional Adjustment.

This is where assessment moves from measurement to professional decision-making, from recording learning to shaping it.

Planning becomes iterative rather than fixed. Differentiation becomes informed rather than reactive. Teaching becomes responsive rather than predetermined.

Supporting Students to See Their Own Growth

When students understand the progression of understanding from the beginning, something shifts.

They begin to articulate:

“I can explain this, but I can’t yet apply it.”

“I understand the idea, but I’m confusing these two concepts.”

“I can transfer this in one context, but not yet in another.”

Assessment becomes information.

Not judgment.

When the rubric becomes a blueprint for gathering insight and that insight genuinely informs what happens next, assessment no longer interrupts learning.

It drives it.

These reflections continue to reinforce ideas explored in Leveraging Deep Learning: Strategies and Tools for Conceptual Understanding, particularly the positioning of assessment as continuous, responsive, and embedded within learning rather than separate from it.

More information about the book can be found here:

https://elevatebooksedu.com/leveraging-deep-learning

Because when assessment is designed to reveal thinking and used to guide the next move, understanding has space to grow.

If this reflection resonates with your practice, I always welcome continued professional conversation.